Alex Holcombe argues that if academics learn how to code and post their code, replication can become routine instead of a heroic, painstaking effort.

The post is especially timely following the publication last week of a paper in Science documenting the difficulty of replicating published psychology studies.

Contact: alex.holcombe@sydney.edu.au or Twitter @ceptional

A published article is only a summary description of the work that was done and also only a summary of the data that was collected. Making the published article open access is important, but is only a start towards opening up the research described by the article.

Openness is fundamental to science, because scientific results should be verifiable. For each result, at least one of two possible verification approaches ought to be made viable. One is scrutinizing every step of the research process that yielded the new finding, looking for errors or misleading conclusions. The second approach is to repeat the research.

The two approaches are linked, in that both require full disclosure of the research process. The research can only be judged error-free if every step of the research is available to be scrutinized. This information is also what’s needed to repeat the research. I will use the term reproducible research for this high standard of full publication that we should be aspiring to.

Figure 1 Via the Center for Open Science, a community of researchers (including myself) have developed badges to indicate that the components of a scientific report needed for reproducibility are available. The badges can then be awarded to qualified papers by journals.

Unfortunately, the explicitness that would be required for exact reproduction is far higher than the norm of what is typically published. This is true even for the most influential studies. I come across this problem regularly in my role as “Registered Replication Report” co-editor for the journal Perspectives on Psychological Science. As editor, I supervise the replication of important experiments, and this often requires extensive work in re-developing experiment software and analysis code on the basis of the summaries typical in journal articles.

In experimental psychology, the main steps of doing an experiment are presenting the stimuli to the participant, collecting the responses, doing statistical analysis of the responses, and creating the plots. If one were to write out every step involved in these, it would take a very long time.

It would certainly take more time than most academics have. Academics today are under tremendous pressure to generate new findings for their next paper, and under little or no pressure to document their processes meticulously.

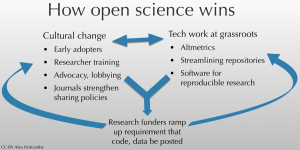

Funder mandates and incentives have been pushing researchers towards making their science more reproducible. This, accompanied by cultural change at the grassroots and at the level of journal editors, is making substantial headway. Efforts at each of these levels reinforce each other in a virtuous circle.

Figure 2 A virtuous circle of action at multiple levels is needed to achieve full reproducibility. “Open science” is a closely related concept.

One facet of the virtuous circle that often goes unappreciated is automation. Automation requires advances in technology. In science, these advances are often achieved by researchers and programmers contributing open source code. Because automation has many benefits of its own, it can progress independently of reproducibility incentives and culture.

Automation has of course transformed many industries previously, from the making of telephone calls (no more switchboard operators) to the making of cars (robots do much of the assembly).

Figure 3 An early printing press. While the actual printing is here done by machine, humans must guide the machine through hundreds of steps. Unfortunately, this is reminiscent of how much of laboratory science is done today.

But rather than being a large-scale production system, science is more like a craft. In experimental psychology, each laboratory is doing its own little study, often with experiments significantly different from those the same lab ran a year ago. From one project to the next, the steps can change. If a researcher is doing an experiment or data analysis procedure that they may never have to repeat, there is little incentive to automate it.

It is almost always true, however, that aspects of one’s data analysis will need to be repeated. I refer not only to the need to repeat the analysis for future projects, but also to what one must do to satisfy reviewers. After submission of one’s manuscript to a journal, it tends to come back with peer reviewer complaints about the amount of data (not enough) or the way the data was analysed (not quite right).

This is where I was first truly pleased by having automated my processes – when to satisfy the reviewers, I only needed to change a few lines in my analysis code. Following that, simply clicking “run” in my analysis program resulted in all the relevant numbers and statistics being calculated and all the plots of results being re-done and saved in a tidy folder.
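To make that workflow concrete, here is a minimal Python sketch of an analysis script of this kind. It is not the code from any actual study: the data, the condition names, the exclusion thresholds and the output file name are all made up for illustration, and plotting is left out so that the example needs only the standard library. The point is the structure: the settings a reviewer might question sit at the top, and one function regenerates every output.

```python
import csv
import statistics
from pathlib import Path

# Analysis settings gathered in one place: these are the "few lines" to
# change when a reviewer asks for a different analysis. All names and
# values here are hypothetical.
MIN_RT_MS = 200    # exclude responses faster than this
MAX_RT_MS = 2000   # exclude responses slower than this

# Made-up trials: (condition, response time in milliseconds).
TRIALS = [
    ("congruent", 450), ("congruent", 510), ("congruent", 150),
    ("incongruent", 620), ("incongruent", 580), ("incongruent", 2500),
]


def summarise(trials, min_rt, max_rt):
    """Return {condition: (n, mean RT)} after dropping out-of-range trials."""
    kept = {}
    for condition, rt in trials:
        if min_rt <= rt <= max_rt:
            kept.setdefault(condition, []).append(rt)
    return {c: (len(rts), statistics.mean(rts)) for c, rts in kept.items()}


def run_analysis(out_dir="results"):
    """Recompute every summary and write it to a single output folder."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    summary = summarise(TRIALS, MIN_RT_MS, MAX_RT_MS)
    with open(out / "summary.csv", "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["condition", "n", "mean_rt_ms"])
        for condition, (n, mean_rt) in sorted(summary.items()):
            writer.writerow([condition, n, mean_rt])
    return summary


if __name__ == "__main__":
    print(run_analysis())
```

If a reviewer objected to the exclusion rule, only `MIN_RT_MS` or `MAX_RT_MS` would need editing before re-running the script; a real project would read its trials from data files and add plotting calls inside `run_analysis`.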

Unfortunately, most researchers never learn the skills needed to automate their data analysis or any other process, at least in psychology. Usually it involves programming. For data analysis, the best language to learn is R or Python.

R has gradually become easier and easier to use, but for those without much programming experience, an intensive effort is still required to become comfortable with it. Python is more elegant, but doesn’t have as much easily-usable statistical code available.

I have begun organising R programming classes for postgraduates at the University of Sydney; here is a description of the first one. I have two main reasons for doing this. Foremost is to empower students, both with the ability to automate their data analyses and with programming skills – useful for a range of endeavours. Second is to make research reproducible, which can only happen if the new generation of scientists is able to automate its processes.

A fantastic organisation of volunteers called Software Carpentry teaches researchers to program. Two junior researchers at the University of Sydney this year completed the Software Carpentry instructor training program – Fabian Held and Westa Domenova. With Fabian and Westa as the instructors, a 2-day Software Carpentry bootcamp is being planned for February 2016, as part of the ResBaz multi-site research training conference.

Unfortunately, formal postgraduate programming classes have been sorely lacking at nearly every university I have been associated with. At the University of Sydney, too, we don’t have the financial resources to set up a class with a properly paid instructor. Fortunately, Software Carpentry provides a fantastic low-cost, volunteer-based way to disseminate programming skills. While it would be hard to find volunteer instructors for most types of classes, something about programming seems to bring out the best in people – just have a look at the amazing range of software resources created by the open-source community.

I like to think I am helping create a future where reproducing the research in published psychology articles will not require extensive software development or many manual steps that must be reverse-engineered from a few paragraphs of description in the article. For the data analysis component of projects, if not the actual experiments, one ought to be able to download and run code that is published along with the paper. Aside from being good for the world, that would make my job editing Registered Replication Reports a lot easier.

About the Author

Alex Holcombe is Associate Professor of Psychology and Co-director, Centre for Time, University of Sydney, and Associate Editor, Perspectives on Psychological Science.
